Build Artifacts

In BuildMaster, build artifacts are files that you intend to deploy/release. They're generally added to a build after building/compiling your code, but they can also be imported from a CI server like Jenkins or manually uploaded.

BuildMaster also supports Package and Container-based Artifacts; these are essentially references to content that's been published to a ProGet feed, like NuGet packages, PyPI packages, and Docker container images.

Browsing Artifacts

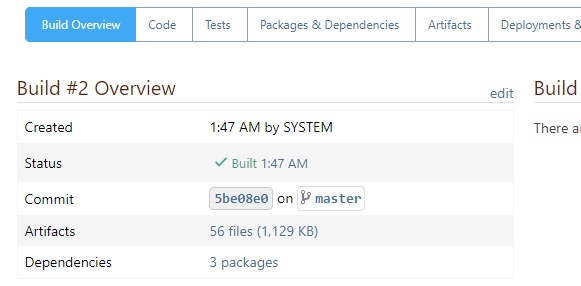

Build artifacts are an integral part of a build, you can easily browse these artifacts from the Build Overview page:

When a build has no artifacts, a warning is displayed.

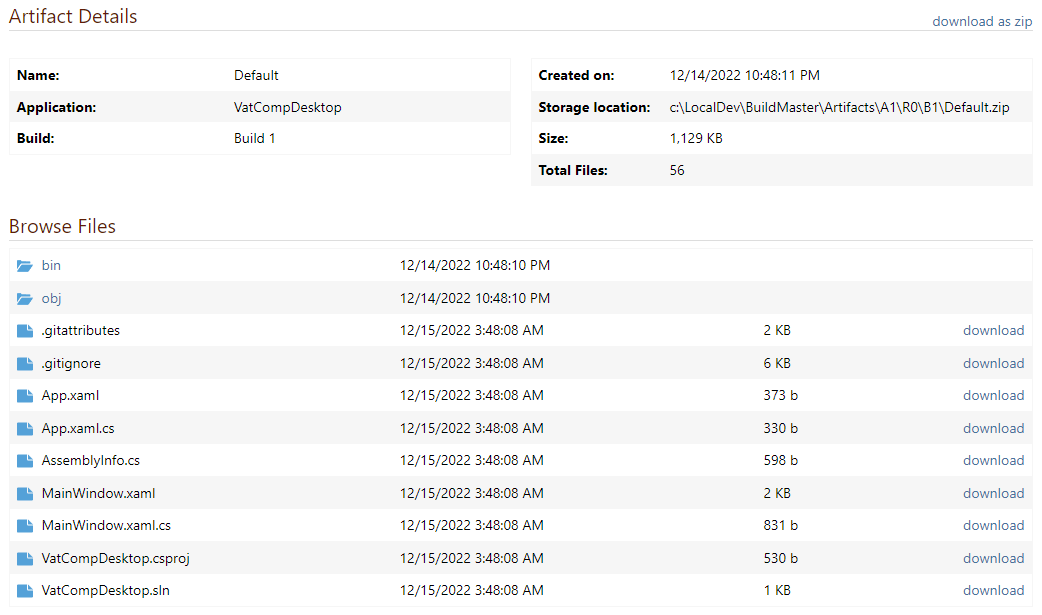

The Artifacts navigation tab of a build will show you when the artifact was added, as well as allow you to browse or download individual files.

You can browse all artifacts in BuildMaster under Administration > Build Artifacts.

Adding Artifacts to a Build

There are three ways to add artifacts to a build: the Create-Artifact operation, the Import-Artifact operations, and manual upload.

All three methods work the same: the artifacts will be compressed in a .zip file, saved to disk, and associated with the build. The zipped artifacts file will be named Default, but you can specify a different name.

You can add multiple artifacts files to a build, as long as each has a different file name. This can be useful when you want to deploy different components of an application, like a web app, a Windows service, a database, etc.

Build artifacts aren't limited to deployable components; some users have found artifacts ideal for storing compliance or specialized diff reports generated during the build process.

Create-Artifact Operation

The most common way to add artifacts to a build is by running the Create-Artifact operation in a build script. Build script templates use this operation behind the scenes, but if you're writing your own script, you can simply run it without any parameters:

Create-Artifact;

Without parameters, this operation collects files in the working directory, compresses them in a zip file named Default, and adds the artifact to the build.
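As a rough illustration of the behavior described above, the following Python sketch zips every file under a working directory into a file named Default.zip. It is not BuildMaster's implementation; `create_artifact` and `demo` are hypothetical names, and storing the zip in a separate output directory is an assumption made only to keep the example self-contained.

```python
import tempfile
import zipfile
from pathlib import Path


def create_artifact(working_dir: Path, output_dir: Path, name: str = "Default") -> Path:
    """Zip every file under working_dir into output_dir/<name>.zip (illustrative only)."""
    zip_path = output_dir / f"{name}.zip"
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in sorted(working_dir.rglob("*")):
            if path.is_file():
                # Store each entry relative to the working directory.
                archive.write(path, path.relative_to(working_dir).as_posix())
    return zip_path


def demo() -> list:
    """Build a tiny working directory, capture it, and list the zip contents."""
    with tempfile.TemporaryDirectory() as work, tempfile.TemporaryDirectory() as out:
        work_dir = Path(work)
        (work_dir / "bin").mkdir()
        (work_dir / "bin" / "app.dll").write_text("binary")
        (work_dir / "readme.txt").write_text("hello")
        zip_path = create_artifact(work_dir, Path(out))
        with zipfile.ZipFile(zip_path) as archive:
            return [zip_path.name] + sorted(archive.namelist())


if __name__ == "__main__":
    print(demo())
```

Passing a different `name` corresponds to giving the artifacts file a name other than Default.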

Capturing Different Files: Subdirectory and Filemasks

By default, Create-Artifact will capture all files from the working directory ($WorkingDirectory). However, you'll usually only want a subset of those files, such as those within the build output folder.

You can use the From parameter to capture only a subdirectory, for example:

Create-Artifact
(
    From: src/bin/release
);

You use the Include and Exclude parameters to further control the files that will be captured in the artifact. These parameters follow our wildcard masking rules, which provide a lot of flexibility in the files you want to capture.

For example, you could capture two different sets of artifacts—one for regular deployment and one for debugging purposes—by using different artifacts file names, subdirectory, and mask parameters.

Create-Artifact MyApp
(
    From: src/bin/release,
    Include: @(**.dll)
);

Create-Artifact MyAppWithDebug
(
    From: src/bin/release,
    Include: @(**.dll, **.pdb, **.xml)
);
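To make the effect of Include and Exclude masks more concrete, here is a small Python sketch of mask-based selection. The semantics are assumptions chosen for illustration (`**` crosses directory boundaries, `*` and `?` stay within one path segment, and Exclude is applied after Include); the wildcard masking rules remain the authoritative description. `mask_to_regex` and `select_files` are hypothetical helpers, not part of BuildMaster.

```python
import re


def mask_to_regex(mask: str) -> "re.Pattern":
    """Translate a wildcard mask into a regex (simplified, assumed semantics)."""
    parts = []
    i = 0
    while i < len(mask):
        if mask.startswith("**", i):
            parts.append(".*")       # assumed: ** may cross directory boundaries
            i += 2
        elif mask[i] == "*":
            parts.append("[^/]*")    # assumed: * stays within one path segment
            i += 1
        elif mask[i] == "?":
            parts.append("[^/]")     # assumed: ? matches one non-separator character
            i += 1
        else:
            parts.append(re.escape(mask[i]))
            i += 1
    return re.compile("".join(parts))


def select_files(paths, include=("**",), exclude=()):
    """Keep paths matching any include mask and no exclude mask."""
    includes = [mask_to_regex(m) for m in include]
    excludes = [mask_to_regex(m) for m in exclude]
    return [
        p for p in paths
        if any(r.fullmatch(p) for r in includes)
        and not any(r.fullmatch(p) for r in excludes)
    ]


FILES = ["MyApp.dll", "MyApp.pdb", "MyApp.xml", "lib/Helper.dll", "web.appSettings.config"]

if __name__ == "__main__":
    print(select_files(FILES, include=["**.dll"]))
    print(select_files(FILES, include=["**.dll", "**.pdb", "**.xml"]))
```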

The Exclude parameter can be useful for removing configuration files that you'll later deploy. For example:

Create-Artifact
(
    Exclude: web.appSettings.config
);

Empty Artifacts File Warning

If no files were captured by Create-Artifact, an empty artifacts file will still be created—but there will be a warning in the logs:

WARNING: There were no files or directories captured in this artifact.

You can override this by setting the IgnoreEmptyArtifact parameter to True.

Artifacts File Already Exists Error

If you try to add an artifacts file to a build but a file with that name already exists, the script will raise an error:

ERROR: An artifact named "Default" already exists for this build.

This is to prevent accidentally overwriting artifacts in complex scripts, but it may come up if you're rerunning a build script. The best way to resolve this error is to delete the existing artifacts file.

You can override this by setting the Overwrite parameter to True.
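The name-collision rule can be modeled in a few lines. This Python sketch is only a conceptual model of the behavior described above, not BuildMaster code; the `BuildArtifacts` class and its `overwrite` argument are hypothetical stand-ins for a build's artifacts and the Overwrite parameter.

```python
class ArtifactExistsError(Exception):
    """Raised when an artifacts file name is already used within a build."""


class BuildArtifacts:
    """Toy in-memory model of the artifacts files attached to one build."""

    def __init__(self):
        self._files = {}

    def add(self, name, content, overwrite=False):
        # Refuse to silently replace an existing artifacts file.
        if name in self._files and not overwrite:
            raise ArtifactExistsError(
                f'An artifact named "{name}" already exists for this build.'
            )
        self._files[name] = content

    def get(self, name):
        return self._files[name]


def try_add_twice():
    """Return the error message produced by adding the same name twice."""
    build = BuildArtifacts()
    build.add("Default", b"first")
    try:
        build.add("Default", b"second")
    except ArtifactExistsError as error:
        return str(error)
    return None
```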

Import-Artifact Operations

Artifacts can also be imported from a CI server like Jenkins or TeamCity. This is typically done with an Import Artifacts script template, but if you're writing your own script, you can simply run the appropriate operation without any parameters:

Jenkins::Import-Artifacts;

When you don't specify any parameters, the operation will attempt to download artifacts from the associated CI server build. You can also specify parameters to filter what CI server artifacts are imported, such as:

Jenkins::Import-Artifacts
(
    Include: @(**.dll, **.pdb, **.xml)
);

If you need to capture items from a subdirectory, or combine artifacts from multiple CI server projects, you can use a combination of the «ci-server»::Download-Artifacts and Create-Artifact operations.

To learn more, see our Importing from CI Servers (Jenkins, TeamCity) documentation.

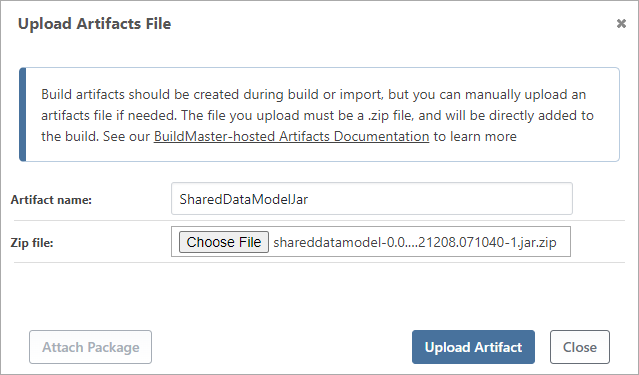

Manually Upload

Build artifacts should be created by a build or import script, but you can manually upload them if needed.

For example, if the script is not creating the artifact properly and you don't have time to debug, you can manually upload an artifact by navigating to the Artifacts navigation tab of a build, then clicking manually add.

Note that you'll be uploading a single artifacts file, i.e. a .zip file that contains the artifacts you'll want to deploy later.

Deploying Artifacts to Servers

Once you've added artifacts to a build, you can deploy them to servers by using a deployment script.

If you're not using script templates and you're writing your own script, you'll use the Deploy-Artifact operation for this. You can use it without any parameters, but it's common to at least specify a target directory:

Deploy-Artifact
(
    To: d:\WebApps\MyApp
);

When run in this manner, Deploy-Artifact will synchronize the contents of the artifacts file and target directory (d:\WebApps\MyApp in the example above) by performing an incremental transfer.

Incremental/Optimized Transfer

To reduce deployment time, the Deploy-Artifact operation can use an incremental deployment approach that will copy only the files that changed. A file is considered to be changed if the file size or last-modified-on date is different.

If you've used Microsoft's robocopy before, this is similar to how the /mir option behaves.

Here's how the logic works.

- Files in the target directory that are not in the artifacts file will be deleted. For example, if you removed resources\logo.jpg from your source control and created an artifacts file without that file, the file would be removed from the target (e.g. d:\WebApps\MyApp\resources\logo.jpg)

- Files not in the target directory but in the artifacts file will be added. For example, if you added resources\logo.svg, the file would be deployed to the target (e.g. d:\WebApps\MyApp\resources\logo.svg)

- Files in the target directory that are different from the artifacts file will be replaced. For example, if you edited styles\common.css (which would give a different file size or date), the new file would be deployed to the target (e.g. d:\WebApps\MyApp\styles\common.css)

You can override this behavior by using two parameters:

- TransferAll; setting this to true will cause all files to be copied, regardless of whether they've changed. This may make sense to do if your artifact has thousands of tiny files, and it would take more time to compare the files than simply transferring and overwriting them.

- DoNotClearTargetDirectory; setting this to true will not delete files that aren't in the build artifacts file. This may make sense if you want to simply add files to a directory.
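The comparison rules and the two overrides described above can be sketched as a planning function. This is a minimal Python model under stated assumptions, not the actual Deploy-Artifact implementation: files are represented as a mapping from relative path to a (size, last-modified) pair, and `plan_transfer`, `transfer_all`, and `do_not_clear_target` are hypothetical names standing in for the operation and its parameters.

```python
from typing import Dict, List, NamedTuple, Tuple

FileInfo = Tuple[int, float]  # (size in bytes, last-modified timestamp)


class TransferPlan(NamedTuple):
    copy: List[str]    # files to add or replace in the target
    delete: List[str]  # files to remove from the target


def plan_transfer(
    artifact: Dict[str, FileInfo],
    target: Dict[str, FileInfo],
    transfer_all: bool = False,
    do_not_clear_target: bool = False,
) -> TransferPlan:
    """Decide which files to copy and delete for a mirror-style deployment."""
    # A file is copied when it is missing from the target, or when its
    # size or last-modified value differs, or when everything is forced.
    copy = sorted(
        path for path, info in artifact.items()
        if transfer_all or target.get(path) != info
    )
    # Files that exist only in the target are removed unless told otherwise.
    delete = [] if do_not_clear_target else sorted(set(target) - set(artifact))
    return TransferPlan(copy, delete)


ARTIFACT = {
    "index.html": (100, 100.0),
    "resources/logo.svg": (2048, 200.0),
    "styles/common.css": (900, 200.0),
}
TARGET = {
    "index.html": (100, 100.0),
    "resources/logo.jpg": (4096, 100.0),
    "styles/common.css": (850, 100.0),
}

if __name__ == "__main__":
    print(plan_transfer(ARTIFACT, TARGET))
```

With the sample data, the unchanged index.html is skipped, logo.svg and common.css are copied, and logo.jpg is deleted, which mirrors the three cases in the list.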

For example, if your build script created an artifacts file named ExtResources that contains files from a different repository and you simply want to add them on top of your existing resources, you could use this pattern:

Deploy-Artifact ExtResources
(
    To: G:\WebApps\MyWebSite\resources\external,
    DoNotClearTargetDirectory: True,
    TransferAllFiles: True
);

Git Repositories & Last Modified Date

Git repositories do not store the Last Modified Date for files. This means that the last modified date will be set to whenever the files were checked out. This is typically when the build script was run.

This behavior makes the incremental deployment approach effectively meaningless, as all files will always show as changed. To work around this behavior in Git, you can set the PreserveLastModified parameter on the Git::Checkout-Code operation:

Git::Checkout-Code
(
    PreserveLastModified: true
);

When set, the operation will search for a commit associated with each file and set the commit date as the file's last-modified-date. If you have a significant number of files and commits in your repository, this may take a lot of time.
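For intuition about why this can be slow, the following Python sketch applies the same idea outside BuildMaster by asking the git command line for each tracked file's most recent commit time and writing it back as the file's modification time. It is an approximation for illustration only, not how Git::Checkout-Code is implemented; the function names are hypothetical, and it assumes the `git` executable is on the PATH.

```python
import os
import subprocess
from pathlib import Path
from typing import Optional


def last_commit_timestamp(repo: Path, relative_path: str) -> Optional[int]:
    """Return the Unix time of the latest commit touching the file, if any."""
    result = subprocess.run(
        ["git", "log", "-1", "--format=%ct", "--", relative_path],
        cwd=repo, check=True, capture_output=True, text=True,
    )
    output = result.stdout.strip()
    return int(output) if output else None


def set_last_modified(path: Path, timestamp: int) -> None:
    """Set both access and modification time of path to timestamp."""
    os.utime(path, (timestamp, timestamp))


def preserve_last_modified(repo: Path) -> None:
    """Stamp every tracked file with the date of its latest commit."""
    listing = subprocess.run(
        ["git", "ls-files", "-z"],
        cwd=repo, check=True, capture_output=True, text=True,
    ).stdout
    for relative_path in filter(None, listing.split("\0")):
        timestamp = last_commit_timestamp(repo, relative_path)
        if timestamp is not None:
            # One git invocation per file: slow on large repositories.
            set_last_modified(repo / relative_path, timestamp)
```

Because this sketch runs one history lookup per file, its cost grows with both the number of files and the depth of history.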

Deploying as a Zip File

Instead of synchronizing the artifacts file with a target directory, you can deploy the entire artifacts file as a .zip file. This may be useful if you want to deploy desktop-based applications to a file share, or otherwise store the .zip file somewhere.

The value you set for the DeployAsZip parameter will control this behavior.

| Value | Behavior |
|---|---|
| true | deploy the file using the artifacts file name (e.g. Default.zip or WebApp.zip) |
| «file-name.zip» | deploy the file to the specified file name, which should end in .zip; a .zip extension will be added if not specified |
| false or not set | use normal deploying behavior (i.e. don't deploy as .zip) |
| any other value | for example, including a directory name will result in an error |
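The table can be read as a small decision function. The Python sketch below is one possible interpretation, not the operation's actual parsing logic; `resolve_zip_name` is a hypothetical helper, and treating true/false case-insensitively and rejecting any value containing a path separator are assumptions.

```python
from typing import Optional


def resolve_zip_name(deploy_as_zip: Optional[str], artifact_name: str = "Default") -> Optional[str]:
    """Map a DeployAsZip value to a zip file name, or None for normal deployment."""
    value = (deploy_as_zip or "").strip()
    if value == "" or value.lower() == "false":
        return None                          # normal deployment, no zip
    if value.lower() == "true":
        return f"{artifact_name}.zip"        # use the artifacts file name
    if "/" in value or "\\" in value:
        # Assumed: a directory component is one of the invalid "other values".
        raise ValueError(f"DeployAsZip must be a file name, not a path: {value!r}")
    if not value.lower().endswith(".zip"):
        value += ".zip"                      # add the extension if missing
    return value


def is_rejected(value: str) -> bool:
    """Convenience wrapper reporting whether a value would raise an error."""
    try:
        resolve_zip_name(value)
    except ValueError:
        return True
    return False
```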

For example, if you wanted to deploy a released desktop application to a shared drive, you might do something like this:

Deploy-Artifact
(
    To: \\filesv1\DesktopApps\MyApp,
    DeployAsZip: MyApp-$ReleaseNumber.zip
);

Deploying Artifacts from Other Applications or Builds

Your deploy scripts aren't limited to deploying artifacts associated with the current build. You can set different values on the sources tab of the build script or operation, which might be useful for a number of scenarios, including:

- deploying reusable components of a different application into your build, similar to how installing NuGet or npm packages might work

- having a controlling application that can orchestrate multiple deployments

For example, suppose another application in BuildMaster called ExtBamResources primarily contains images, logos, and other resources that are shared by many applications. You could deploy ExtBamResources's artifacts into the working directory in your build script, prior to creating an artifact for your application:

Deploy-Artifact
(
    Application: ExtBamResources,
    ReleaseNumber: $ExtBamRelease,
    Build: furthest,
    To: src\app\res\bamresources
);

Note how the ReleaseNumber and Build can be used to control the version of the build artifacts you wish to deploy.

Package and Container-based Artifacts

The build artifact model isn't a great fit for applications like NuGet packages, PyPI packages, or Docker containers. This is because packages and containers are not deployed as normal files:

- Pre-release content (i.e. a package or container image) is built with a specialized tool like dotnet pack or docker build

- The content is published to a feed/repository like ProGet

- Later, the content is tested with a specialized tool like Visual Studio or docker run

- After testing, the package/image is repackaged and/or promoted

- The content is eventually repackaged into a release version

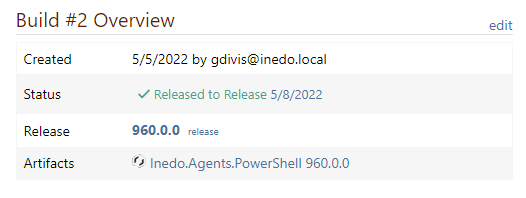

In this workflow, the feed/repository (e.g. ProGet) is responsible for storing/managing the content and there is no need for a build artifact file to be managed by BuildMaster. For example, a build of an application that creates NuGet packages might look like this:

To facilitate this workflow, you can add packages and container images to a build. Conceptually, this is similar to how artifact files work.

In the above image, clicking on the attached package name (i.e. Inedo.Agents.PowerShell) would navigate directly to the ProGet package.

The simplest way to add a package to a build is to use the Attach-Package operation:

Packages::Attach-Package
(
    Name: Kramerica.CorpLib,
    Version: $ReleaseNumber.$BuildNumber,
    Source: feed::internal-nuget
);

This will then associate the package with the current build. The Containers::Attach-Container operation works in the same manner.